Lets face it, most IT infrastructures today are more complex and distributed than ever before. It is not uncommon to run on-premise systems, usually in multiple data centers, one or more public cloud providers and SaaS, all to serve business functions.

No two systems are alike, no two cloud vendors can agree on a set of standard interfaces for availability and performance monitoring, so you are left with “shadow it” and “silos of systems”, each with their own management interfaces and monitoring services (if they have such offerings).

So how do you approach this? At Spearhead, we defined an MVP like method for IT monitoring specifically. We call it MVM and I’ve written about how to reduce the noise previously using this method. Nothing has changed, MVM is proving itself to be invaluable and the three step process to monitoring using the scientific method has also stood the test of time. They are simple by design because we all know the true enemy here is complexity. We consistently deliver faster and better results using the three step process and our MVM approach time and time again.

One of our latest projects required the migration of 1500 hosts to Checkmk from an open source Nagios solution to Checkmk Enterprise Edition. The migration is not what is important here, but the number of legacy custom checks that we needed to support while upgrading all of the agents to Checkmk. This was problematic, because root access to these systems was extremely difficult without many approvals and beyond the initial deploy of the vanilla Checkmk agent and a self-defined MRPE configuration, we we’re left without any access. While we waited, we were getting the output from the previous checks through Checkmk’s native MRPE, but we were unable to apply threshold modifications (amongst others) so operators would end-up disabling notifications.

So there we were, the system migrated, but using the old systems check plug-ins and without access to the systems so that we could update and it would take a couple of weeks for the paperwork to go through. Luckily Checkmk provides a Service Discovery mechanism that automatically detects services. There were almost 100k services, so taking them one by one would have taken some time,but luckily we could apply an MVM approach and using a rule we disabled specific services.

Disabling services is not done lightly, it may create a blind-spot, but we quickly identified that certain services were nothing more than process monitoring with fancy names. So the default Checkmk agent already covers process and does so with more more depth and precision. So we swapped one legacy check for a native Checkmk check.

Second, we noticed that many of the services that would generate a lot of noise we’re of no importance outside specific hours. We applied our best practices and defined time-periods that were relevant and further reduced the noise.

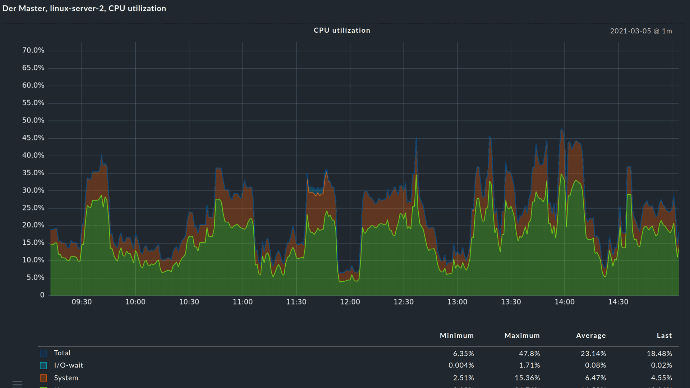

Lastly, we noticed checks that would complain about things outside of anyone’s control (a report that would need to run at a specific time would always pin the CPU to 100% utilization), so we disabled monitoring altogether during that time period for CPU.

These three simple steps took care of the what to monitor and when to monitor. We reduced from more than 1k alerts per day to less then 100 of which all were relevant and real. Two weeks later, we received access, deployed the agent, ran the register command and forgot about ever logging onto those systems again.

Checkmk is the only solution that we’ve seen that can let you describe your desired state via a rules engine. The rules engine is a powerful feature that can describe complex systems and their state in simple, easy to understand rules that then take care of fixing or alerting whenever something deviates from our desired state.